What Insurers Can Learn From Tech Giants


The competitive advantage of the world’s leading tech companies resides in the way they use data. Whether you’re searching the web for an answer to an obscure question, or you’re seeing ads for the exact product you’ve been thinking of buying, technology’s ability to anticipate our needs is becoming astoundingly accurate.

Imagine if insurance companies could get this good at knowing who potential customers are, even at predicting a customer’s behavior and lifetime value. If insurers could apply big data and artificial intelligence the way the leading tech companies do, they could know right away which individuals are in their target customer segment, well before the bureaucracy of filed ratings and underwriting guidelines.

In the insurance industry, success is built on the ability to forecast risk. Now the industry has the opportunity to apply advanced data science to create the new frontier of risk forecasting, customer experience design, and loss prediction. In fact, it is an imperative. So what is holding insurers back?

Insurance companies have a long history of struggling to predict what are known as unpriceable risks. This small percentage of every insurance company’s book of business ends up having a large impact on the central metric of its profitability: the loss ratio. Whether these unpriceable risks are fraudsters, litigious insureds, or people who generate high-dollar claims, they have historically been the most difficult to predict. Because most insurance companies are not using the data and artificial intelligence capabilities that tech companies use to identify these risk profiles before the individual becomes a customer, they must fall back on reactive measures, such as non-renewal, where possible. By then it is too late: the loss has already occurred.
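To make the arithmetic concrete, here is a minimal sketch of how a small slice of a book can move the loss ratio. It assumes the simplified definition of loss ratio as incurred losses divided by earned premium (many insurers also include loss adjustment expenses). Every figure and name below is invented for illustration and is not drawn from any insurer’s data.

```python
def loss_ratio(incurred_losses: float, earned_premium: float) -> float:
    """Simplified loss ratio: incurred losses divided by earned premium."""
    if earned_premium <= 0:
        raise ValueError("earned_premium must be positive")
    return incurred_losses / earned_premium


def segment_totals(policies: int, avg_premium: float, avg_loss: float) -> tuple[float, float]:
    """Return (total premium, total losses) for a segment of the book."""
    return policies * avg_premium, policies * avg_loss


# Hypothetical book of 1,000 policies, each paying $1,000 in premium.
# 98% are ordinary risks; 2% are the hard-to-predict, high-loss risks.
ordinary_premium, ordinary_losses = segment_totals(980, 1_000.0, 550.0)
outlier_premium, outlier_losses = segment_totals(20, 1_000.0, 8_000.0)

# Loss ratio for the whole book versus the book without the 2% segment.
whole_book = loss_ratio(
    ordinary_losses + outlier_losses,
    ordinary_premium + outlier_premium,
)
without_outliers = loss_ratio(ordinary_losses, ordinary_premium)

print(f"Whole book:       {whole_book:.1%}")
print(f"Without outliers: {without_outliers:.1%}")
```

In this made-up book, 2% of the policies account for roughly 15 points of loss ratio, which is why spotting such risks before they are written matters more than their headcount suggests.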

This is not a new problem, and the industry has tried to solve it in a variety of ways. In the 1990s, insurance companies began to introduce credit-based insurance scores into rating plans. Until then, no single factor had been as highly correlated with loss frequency and severity. An applicant’s credit history was, at the time, the best available piece of information for helping an insurer understand an individual’s behaviors and level of conscientiousness. The hypothesis, which insurers were able to support in actuarial filings, was that the higher a person’s credit score, the less likely they would be to file a claim.

However, credit-based insurance scoring may not be here to stay. According to the Insurance Information Institute, some have suggested that the use of insurance scores might unfairly discriminate against certain demographic or economically disadvantaged groups. States such as California, Massachusetts, Hawaii and Maryland place restrictions on the use of credit, and more states are considering moving in a similar direction.

In his book The Four: The Hidden DNA of Amazon, Apple, Facebook, and Google, New York University professor and tech thought leader Scott Galloway noted that between 2010 and 2015, 13 companies managed to outperform the S&P 500 each year. A look at this elite group shows that the majority of these businesses are algorithmically driven: they take in data constantly and use it in real time to update the user experience. A research report from Accenture found that artificial intelligence has the potential to increase corporate profitability in 16 industries by an average of 38% by the year 2035. Kate Smaje, a senior partner at McKinsey and global co-leader of McKinsey Digital, said, “Data is providing the fuel to power better and faster decisions. High-performing organizations are three times more likely than others to say their data and analytics initiatives have contributed at least 20 percent to EBIT.” It is hard to deny that success in our respective businesses is a function of how well we make use of the data available to us.

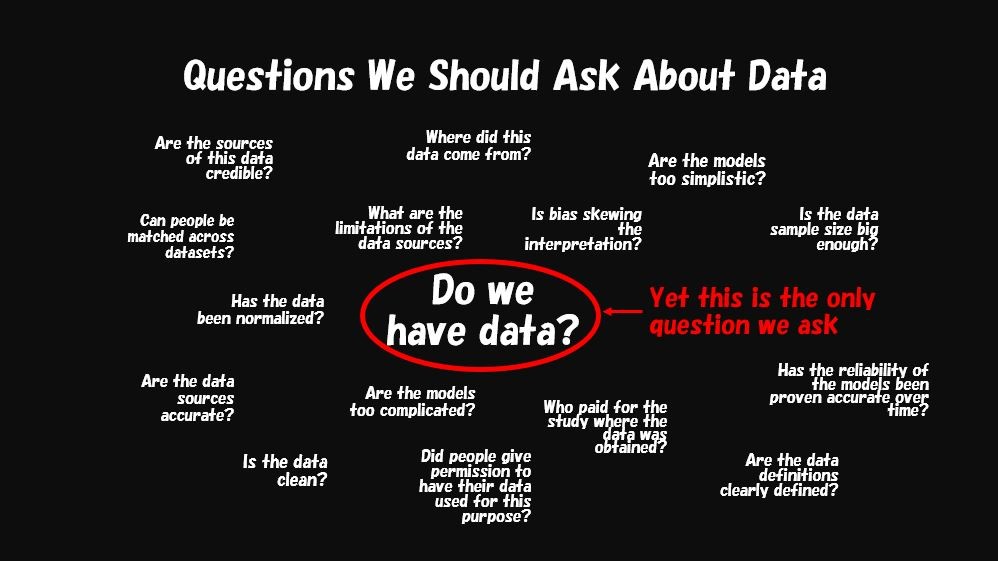

Now that we are entering a new era of advanced predictive capabilities, the future can be predicted earlier and with incredible accuracy. So back to the original question: What is holding insurers back from leveraging these advancements?

The first thing holding insurers back is history and culture. Insurers have been slow adopters but fast followers. Although some insurers are already using data to better understand and segment individual risk profiles, wide adoption and significant impact remain elusive. According to McKinsey, among property and casualty insurers that are leveraging big data and AI, “leading insurers can see loss ratios improve three to five points, new business premiums increase 10 to 15 percent, and retention in profitable segments jump 5 to 10 percent.” The message from every one of the Big Four consulting firms about the biggest opportunities in the insurance industry today is the same: the new normal for insurance companies is to leverage sophisticated big data and to use advanced AI to harness its predictive power.

However, leveraging the power of advanced AI and sophisticated data sets is difficult to do. The guidance and trends are clear here as well: engaging with partners that can deliver immediate value is a way to produce bottom-line results and escape “pilot traps”. This is where InsurTechs and insurance partners like Pinpoint Predictive excel.

Behavioral economics underpins Pinpoint’s proprietary, first-party data, which correlates with insurance outcomes and also reveals the explainable features underlying risk-related behaviors. Pinpoint’s platform leverages behavioral economics and deep learning, providing leading insurers with superior risk-selection scores at the beginning of the customer journey, as well as a deeper understanding of individual-level risks. Whereas traditional risk segmentation puts people into blanket categories based on credit, location, or age, a more individualized approach removes potentially discriminatory categories that may penalize people for their financial status or the neighborhood they live in. And because they draw on such a large volume of data, behavioral-economic profiles remain relatively stable over time.

It’s the data equivalent of truly knowing a person, understanding their personality, and quantifying their propensity for key risk behaviors.

Armed with this knowledge, insurance companies can get smarter about how to find and keep the right customers. A better understanding of risk informs better decisions in shaping their book of business. They can precisely prioritize prospects who think like their best customers, a vast improvement over targeting prospects with look-alike modeling.

If insurance companies get this good at knowing who potential customers are, they can identify unpriceable risks, such as fraud, litigation, and high-cost claims, at the beginning of the customer journey and use this information to augment the performance of their risk models. They can then tailor a customer’s journey, creating relevant experiences based on more accurate predictions of risk and lifetime value.

Over the next decade, most insurance companies will abandon traditional, rudimentary approaches to risk segmentation based on sparse data points. Instead, they will be leveraging deep learning before underwriting a risk to make accurate risk propensity predictions at the beginning of the application process. Pinpoint is at the forefront of driving this transformation. The data modeling predictions that make the most successful tech companies so good at making assessments of who a person is, what they like, and how they buy will be the norm for insurers, not the exception. In this new competitive environment, insurers will be able to directly tie their data strategy to their loss ratios as they get better and better at targeting customers, segmenting risk, and tailoring their customer experience at an individualized level.

Pinpoint is already driving the insurance industry towards this future, and putting the predictive power of big tech in the hands of insurers to help them win. Ready to learn more? Reach out to me at info@pinpoint.ai.

This piece was originally posted via Pinpoint Predictive.

About Pinpoint Predictive

Pinpoint Predictive brings individualized intelligence to insurers by applying deep learning and behavioral economics to accurately predict the risk propensity of an individual insured without using financial-based credit scores.

Their AI-powered platform has revealed hundreds of millions of dollars in bottom-line value to Top 10 insurers by quantifying key points of risk, including the likelihood that an insured will file a claim, cancel a policy, commit fraud, or litigate.

By using Pinpoint’s proprietary Thinkalike Scores in pre-eligibility and pre-renewal decisions, carriers can apply the predictive power of deep learning, in real time, to Pinpoint’s custom database of thousands of sophisticated, privacy-safe data points on approximately 260 million US adults. The result is a significant improvement in their ability to target their most profitable insureds and in their loss ratio.

Discover the powerful results of using individualized intelligence to boost profitability at https://pinpointpredictive.com/.